Tomáš Herceg

CEO @Riganti, founder of Update Conference, Microsoft MVP

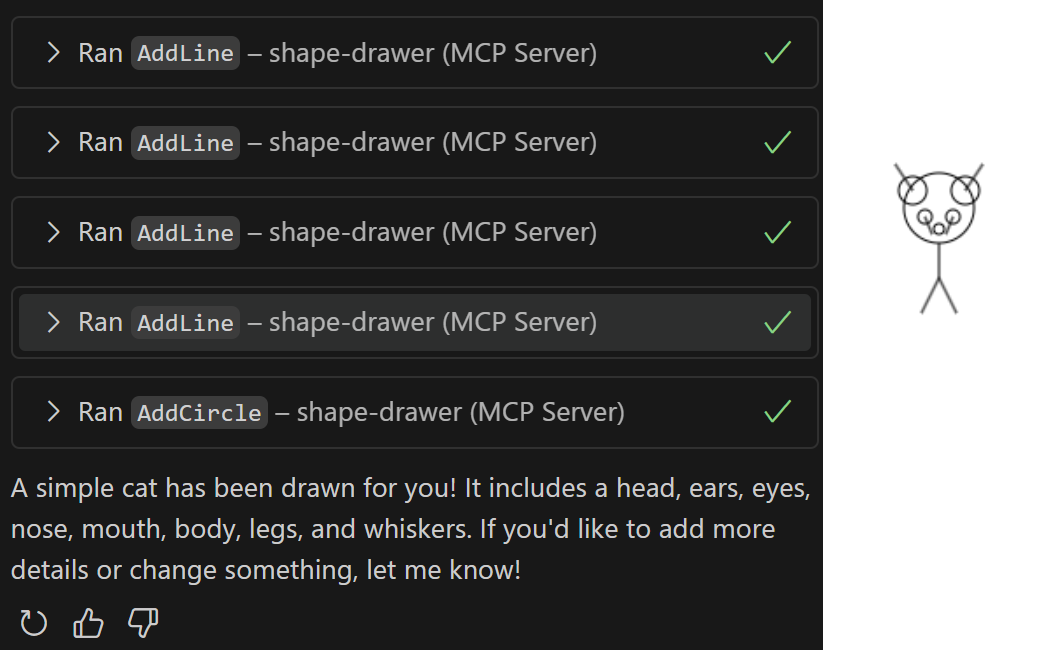

Don't vibecode! Break it down.

Mr. RIGANTI is our new SaaS that enables running AI agents directly in Azure DevOps. Check it out! If you already use GitHub, the same principles apply too - just substitute Mr. RIGANTI with GitHub Copilot.

Setting PATH in Dockerfile for Windows containers

At RIGANTI, we use Azure DevOps to run our CI/CD workloads, and because each project has different requirements, we run the builds on our own custom agents.

Open-source monetization is hard

The .NET community has just seen another open-source drama. FluentAssertions, a popular library that provides a natural and easy-to-read syntax for unit tests, has suddenly changed its license. Starting with version 8, you must pay $130 per developer if you use it in commercial projects.

Beware of dashes in connection string names in Azure App Service

Today, I ran into unusual behavior in an ASP.NET Core application deployed to Azure App Service. It could not find the connection string, even though it was present on the Connection Strings pane of the App Service in the Azure portal.

LLM in C# in 200 lines? Hold my beer...

I once heard, “If you fear something, learn about it, disassemble it to the tiniest pieces, and the fear will just go away.”

New book newsletter

My new book, “Modernizing .NET Web Applications,” is finally out - available for purchase in both printed and digital versions. If you are interested in getting a copy, see the new book’s website.

First time in Chicago at Microsoft Ignite

I just returned from Microsoft Ignite in Chicago - the largest tech conference about Microsoft technology. Unsurprisingly, it was mainly about AI, and it was a terrific experience.

Two conferences in a day? No problem!

Thursday was the first day of our Update Conference Prague - the largest event for .NET developers in the Czech Republic with more than 500 attendees. On the same day, I also had an online session at .NET Conf – a global online .NET conference organized by Microsoft.

DotVVM + MAUI integration

I’ve seen various demos of hosting Blazor applications inside MAUI apps, and I’ve been wondering about the use cases – at first glance, it didn’t seem very practical to me. However, a bit later I realized that if we had had such a thing a few years ago, it would have saved us quite a lot of work.