We have started using Microsoft Teams in my company. Even though the product is not yet mature in some areas, I like it more than Slack, which we used before. That is mostly because of the Office integrations: files and OneNote notebooks in tabs in each channel are just a great idea. But that's another story.

Recently I was at a hackathon and got an idea. Every day at 11 AM, we have the same discussion at work. It goes something like this:

“Hey guys, what about going for lunch?”

“OK, but who else is coming?”

“I don’t know, AB is not here yet, CD won’t come today…”

“OK, so ask AB if he is on the way.”

“And which restaurant are we going to?”

“I don’t know, what do they have today at EF’s?”

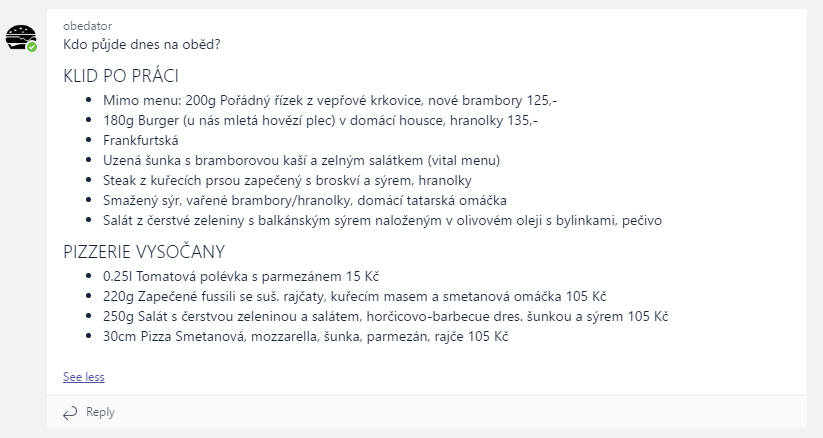

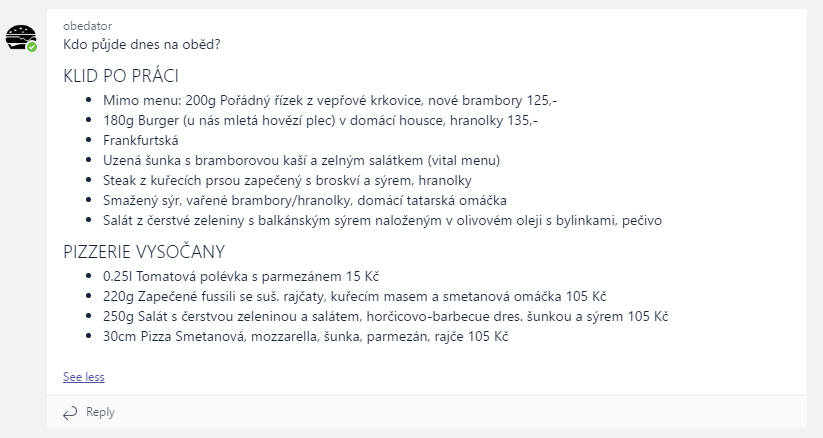

So I decided to build a chat bot that posts a message to a specific Teams channel every day at 11 AM. It grabs the menus of several nearby restaurants and posts them in the channel. (Sorry, the screenshots are in Czech: they show the lunch menus of two restaurants near our office.)

I hadn't had time to play with Azure Functions yet, so I decided to build the bot with them. Actually, that wasn't the only reason. The bot doesn't need to run all the time: it is basically a function that has to be executed every day at a specific time. That makes Azure Functions the right technology for this task. It should also be very cheap, because I can use the consumption-based plan.

Bot Builder SDK and Azure Functions

Unfortunately, there are not many samples that use the Bot Builder SDK with Azure Functions.

I installed Visual Studio 2017 Preview and the Azure Functions Tools for Visual Studio 2017 extension. This lets me create a classic C# project and publish it as an Azure Functions application.

To build a bot, you need to register it and generate an application ID and password. You can enable connectors for various chat providers; I added the Microsoft Teams channel.

On the Settings page, do not specify the Messaging endpoint yet. Just generate your App ID and password.

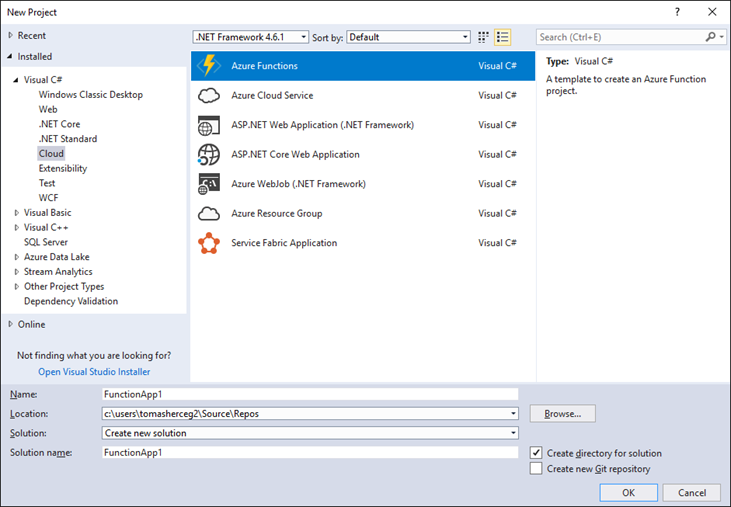

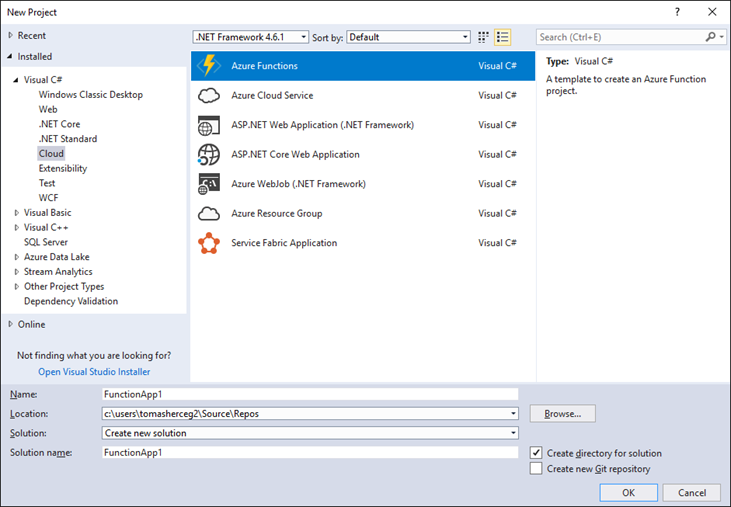

Then, create an Azure Functions project in Visual Studio.

You will need to install several NuGet packages:

- Microsoft.Bot.Builder.Azure

- Microsoft.Bot.Connector.Teams

In a precompiled Functions project, every function is a static method (typically called Run in a static class) marked with the FunctionName attribute. First, we need to create a function that accepts messages from the Bot Framework endpoints.

```csharp
using System.Net;
using System.Net.Http;
using System.Threading;
using System.Threading.Tasks;
using Microsoft.Azure.WebJobs;
using Microsoft.Azure.WebJobs.Extensions.Http;
using Microsoft.Azure.WebJobs.Host;
using Microsoft.Bot.Builder.Azure;
using Microsoft.Bot.Builder.Dialogs;
using Microsoft.Bot.Connector;
using Newtonsoft.Json;

public static class MessageFunction // the class name is arbitrary
{
    [FunctionName("message")]
    public static async Task<object> Run([HttpTrigger] HttpRequestMessage req, TraceWriter log)
    {
        // Initialize the Azure bot service
        using (BotService.Initialize())
        {
            // Deserialize the incoming activity
            string jsonContent = await req.Content.ReadAsStringAsync();
            var activity = JsonConvert.DeserializeObject<Activity>(jsonContent);

            // Authenticate the incoming request; if it is authenticated,
            // activity.ServiceUrl is added to MicrosoftAppCredentials.TrustedHostNames
            if (!await BotService.Authenticator.TryAuthenticateAsync(req, new[] { activity }, CancellationToken.None))
            {
                return BotAuthenticator.GenerateUnauthorizedResponse(req);
            }

            if (activity != null)
            {
                // Route the activity by its type
                switch (activity.GetActivityType())
                {
                    case ActivityTypes.Message:
                        // SystemCommandDialog handles the conversation with the bot
                        await Conversation.SendAsync(activity, () => new SystemCommandDialog()
                        {
                            Log = log
                        });
                        break;

                    case ActivityTypes.ConversationUpdate:
                    case ActivityTypes.ContactRelationUpdate:
                    case ActivityTypes.Typing:
                    case ActivityTypes.DeleteUserData:
                    case ActivityTypes.Ping:
                    default:
                        // These activity types are known but not handled by this bot
                        log.Info($"Activity type ignored: {activity.GetActivityType()}");
                        break;
                }
            }

            return req.CreateResponse(HttpStatusCode.Accepted);
        }
    }
}
```

The HttpTrigger attribute tells Azure Functions to bind the method to HTTP requests. By default, the route is derived from the function name, so this function listens at /api/message. The Bot Framework sends an HTTP POST request to this URL in several situations: when someone writes a message to the bot or mentions it, when the conversation is updated, and so on. You can see the activity types in the switch block.

To test the bot locally, you need to add the application ID and password to the Values section of the local.settings.json file. You can then read the values using Environment.GetEnvironmentVariable("MicrosoftAppId"):

```json
"Values": {
  "MicrosoftAppId": "APP_ID_HERE",
  "MicrosoftAppPassword": "PASSWORD_HERE"
}
```

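One thing to keep in mind: Environment.GetEnvironmentVariable simply returns null when a setting is missing. A forgotten value would therefore only surface later, far away from the cause. A small fail-fast helper makes that obvious. This is just a sketch; GetRequiredSetting is a hypothetical name, not something from the Azure Functions or Bot Builder SDKs:

```csharp
using System;

// Usage: read both bot credentials up front, e.g. before creating a ConnectorClient.
try
{
    string appId = GetRequiredSetting("MicrosoftAppId");
    string appPassword = GetRequiredSetting("MicrosoftAppPassword");
    Console.WriteLine($"Bot credentials loaded for app {appId}.");
}
catch (InvalidOperationException ex)
{
    Console.WriteLine(ex.Message);
}

// Hypothetical helper (not part of the Azure Functions SDK): returns the value of an
// app setting, or throws if it is missing or empty instead of returning null.
// Locally the values come from local.settings.json, in Azure from the Application Settings.
static string GetRequiredSetting(string name)
{
    var value = Environment.GetEnvironmentVariable(name);
    if (string.IsNullOrWhiteSpace(value))
    {
        throw new InvalidOperationException(
            $"App setting '{name}' is missing; add it to local.settings.json or to the Application Settings in Azure.");
    }
    return value;
}
```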
SystemCommandDialog is a class that handles the entire conversation with the bot. I created several configuration commands, so I can use the /register and /unregister commands to enable the bot in a specific Teams channel. You can look at the Bot Builder SDK samples to see what such a dialog looks like.
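The dialog itself isn't shown here, but the command matching inside it can be sketched. In a Teams channel, the bot only receives messages in which it is mentioned, and the mention is part of the message text (something like `<at>LunchBot</at> /register`). It has to be stripped before the text can be compared with a command. The following is a hypothetical, dependency-free sketch of that step. ParseCommand and the bot name LunchBot are made up for illustration, and the regular expression assumes the mention markup looks exactly like that:

```csharp
using System;
using System.Text.RegularExpressions;

// Usage: a channel message that mentions a bot called "LunchBot" (made-up name).
Console.WriteLine(ParseCommand("<at>LunchBot</at> /register")); // prints "register"

// Hypothetical sketch: strip Teams mention markup and map the rest of the text to a command.
// Returns "register", "unregister" or "unknown".
static string ParseCommand(string? text)
{
    if (string.IsNullOrWhiteSpace(text))
    {
        return "unknown";
    }

    // Remove every <at>...</at> mention, then trim surrounding whitespace and ignore case.
    string withoutMentions = Regex.Replace(text, "<at>.*?</at>", string.Empty, RegexOptions.IgnoreCase);
    string command = withoutMentions.Trim().ToLowerInvariant();

    return command switch
    {
        "/register" => "register",
        "/unregister" => "unregister",
        _ => "unknown",
    };
}
```

Keeping the parsing in a plain function like this also means it can be tested without the Bot Framework at all.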

Creating Teams Conversation

I have another function in my project which downloads the lunch menus and posts a message every day at 11 AM.

```csharp
using System;
using System.Threading.Tasks;
using Microsoft.Azure.WebJobs;
using Microsoft.Azure.WebJobs.Host;
using Microsoft.Bot.Connector;
using Microsoft.Bot.Connector.Teams.Models;

public static class AskEveryDayFunction // the class name is arbitrary
{
    [FunctionName("AskEveryDay")]
    public static async Task Run([TimerTrigger("0 0 11 * * 1-5")] TimerInfo myTimer, TraceWriter log)
    {
        try
        {
            string message = ComposeDailyMessage(...);
            await SendMessageToChannel(message, log);
            log.Info("Message sent!");
        }
        catch (Exception ex)
        {
            log.Error($"Error! {ex}", ex);
        }
    }

    private static async Task SendMessageToChannel(string message, TraceWriter log)
    {
        // Identifies the target channel; the IDs are placeholders
        var channelData = new TeamsChannelData
        {
            Channel = new ChannelInfo("CHANNEL_ID_HERE"),
            Team = new TeamInfo("TEAM_ID_HERE"),
            Tenant = new TenantInfo("TENANT_ID_HERE")
        };

        var newMessage = Activity.CreateMessageActivity();
        newMessage.Type = ActivityTypes.Message;
        newMessage.Text = message;

        var conversationParams = new ConversationParameters(
            isGroup: true,
            bot: null,
            members: null,
            activity: (Activity)newMessage,
            channelData: channelData);

        // Create the connection. `subscription` is not defined in this excerpt; presumably it is
        // the channel registration saved by the /register command, including its ServiceUrl.
        var connector = new ConnectorClient(new Uri(subscription.ServiceUrl),
            Environment.GetEnvironmentVariable("MicrosoftAppId"),
            Environment.GetEnvironmentVariable("MicrosoftAppPassword"));
        MicrosoftAppCredentials.TrustServiceUrl(subscription.ServiceUrl, DateTime.MaxValue);

        // Create a new conversation in the channel, starting with the message
        var result = await connector.Conversations.CreateConversationAsync(conversationParams);
        log.Info($"Activity {result.ActivityId} ({result.Id}) started.");
    }
}
```

Thanks to the TimerTrigger, the function is called every weekday (Monday to Friday) at 11 AM.
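The expression uses the six-field NCRONTAB format of Azure Functions timer triggers: {second} {minute} {hour} {day} {month} {day-of-week}. So 0 0 11 * * 1-5 means 11:00:00 on any day of any month, but only Monday (1) through Friday (5). Keep in mind that the schedule is evaluated in UTC unless you configure a time zone for the app, so "11" may not mean 11 AM in your office.

To make the semantics concrete, here is a hand-written sketch that computes the next run for this one expression. NextLunchRun is a hypothetical helper and not a general NCRONTAB parser; the Functions runtime does the real scheduling:

```csharp
using System;
using System.Globalization;

// Usage: a Friday just after lunch time; the next run is on Monday at 11:00.
var fridayNoon = new DateTime(2017, 9, 1, 12, 0, 0, DateTimeKind.Utc);
Console.WriteLine(NextLunchRun(fridayNoon).ToString("yyyy-MM-dd HH:mm", CultureInfo.InvariantCulture)); // 2017-09-04 11:00

// Hypothetical sketch mirroring "0 0 11 * * 1-5" by hand:
//   {second}=0 {minute}=0 {hour}=11 -> at 11:00:00
//   {day}=* {month}=*               -> any day of any month
//   {day-of-week}=1-5               -> Monday (1) to Friday (5); Sunday is 0
// It handles only this one expression; it is not a general NCRONTAB parser.
static DateTime NextLunchRun(DateTime after)
{
    // Start at 11:00:00 on the same day; if that moment is not in the future, take the next day.
    DateTime candidate = after.Date.AddHours(11);
    if (candidate <= after)
    {
        candidate = candidate.AddDays(1);
    }

    // Skip weekends, because 1-5 excludes Saturday (6) and Sunday (0).
    while (candidate.DayOfWeek == DayOfWeek.Saturday || candidate.DayOfWeek == DayOfWeek.Sunday)
    {
        candidate = candidate.AddDays(1);
    }
    return candidate;
}
```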

The conversation in Teams is created by the CreateConversationAsync method, which needs the TeamsChannelData object. To specify the channel that should receive the message, you need the channel ID, team ID and tenant ID.

There are several ways to get these values. For example, you can create a special command that displays them. When you mention the bot in the Teams channel, it can read these IDs from the incoming message and post them back or write them to a log file.

```csharp
public virtual async Task MessageReceivedAsync(IDialogContext context, IAwaitable<IMessageActivity> argument)
{
    var message = await argument;

    // ChannelData is dynamic: it holds the Teams-specific JSON sent with the message
    var channelId = message.ChannelData.channel.id;
    var teamId = message.ChannelData.team.id;
    var tenantId = message.ChannelData.tenant.id;

    await context.PostAsync($"Channel ID: {channelId}\r\n\r\nTeam ID: {teamId}\r\n\r\nTenant ID: {tenantId}");

    // Wait for the next message
    context.Wait(MessageReceivedAsync);
}
```
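The dynamic access above works in a team channel. A 1:1 chat, however, presumably has no team or channel in its channelData, and reading .id from the missing value then throws at runtime. If the command can also be used outside a channel, a null-tolerant lookup is safer. Below is a minimal sketch, assuming the channelData JSON has the channel/team/tenant shape used above. It uses System.Text.Json only to stay dependency-free; the bot itself goes through the SDK and Newtonsoft.Json. ReadTeamsIds and ReadId are hypothetical helper names:

```csharp
using System;
using System.Text.Json;

// Usage: channelData in the shape read above (the IDs are placeholders).
string sample = "{\"channel\":{\"id\":\"CHANNEL_ID\"},\"team\":{\"id\":\"TEAM_ID\"},\"tenant\":{\"id\":\"TENANT_ID\"}}";
var ids = ReadTeamsIds(sample);
Console.WriteLine($"Channel ID: {ids.ChannelId}, Team ID: {ids.TeamId}, Tenant ID: {ids.TenantId}");

// Hypothetical sketch: read channel.id, team.id and tenant.id from a channelData JSON string.
// A missing object or id yields null instead of an exception.
static (string? ChannelId, string? TeamId, string? TenantId) ReadTeamsIds(string channelDataJson)
{
    using JsonDocument doc = JsonDocument.Parse(channelDataJson);
    JsonElement root = doc.RootElement;
    return (ReadId(root, "channel"), ReadId(root, "team"), ReadId(root, "tenant"));
}

// Returns root.<property>.id if both levels exist and the id is a string, otherwise null.
static string? ReadId(JsonElement root, string property)
{
    if (root.ValueKind == JsonValueKind.Object
        && root.TryGetProperty(property, out JsonElement obj)
        && obj.ValueKind == JsonValueKind.Object
        && obj.TryGetProperty("id", out JsonElement id)
        && id.ValueKind == JsonValueKind.String)
    {
        return id.GetString();
    }
    return null;
}
```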

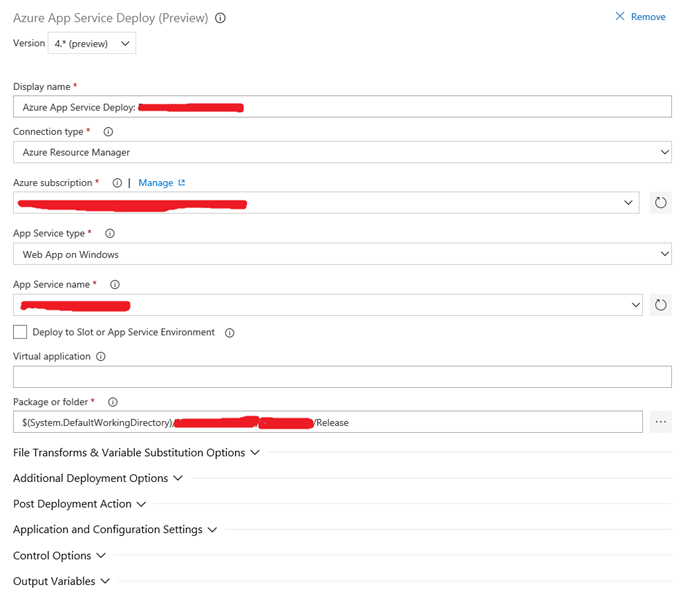

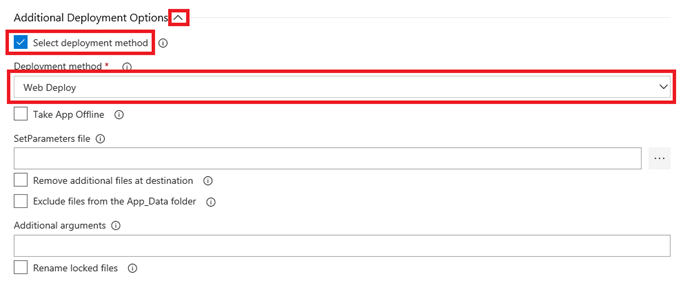

Publishing the App

You can right-click the project in Visual Studio and choose Publish. The wizard lets you create a new Azure Functions application in your subscription.

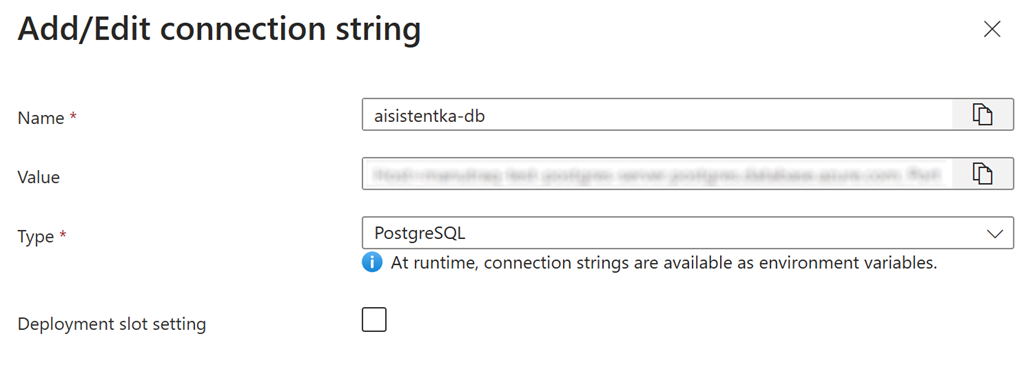

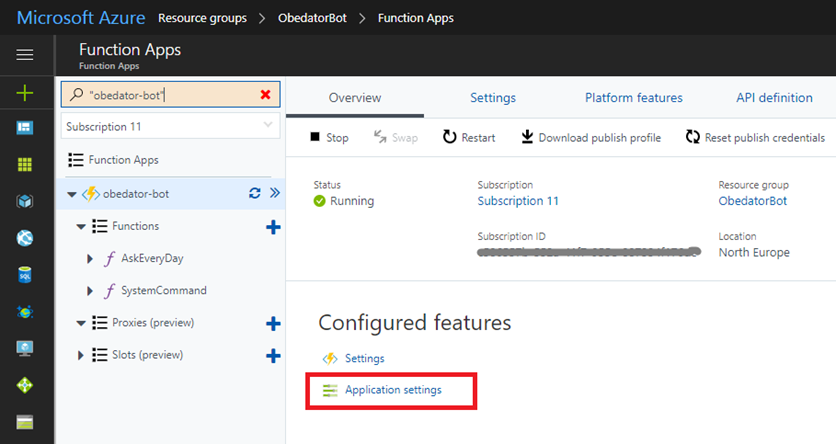

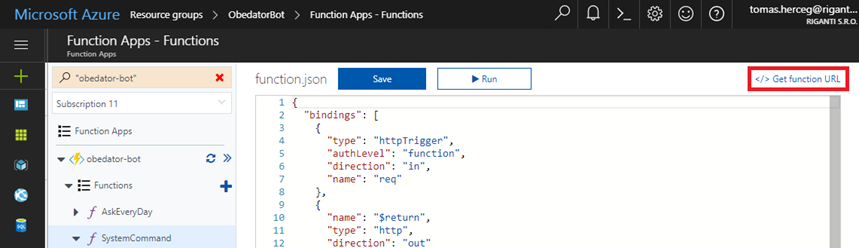

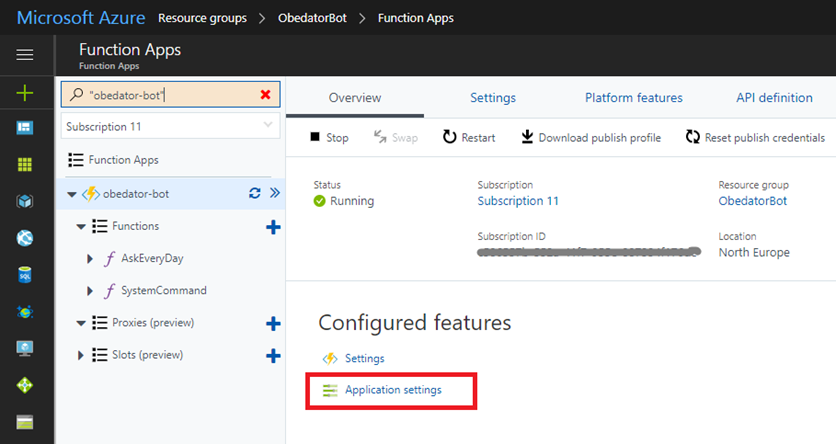

After the application is published, navigate to the Azure portal. First, add the MicrosoftAppId and MicrosoftAppPassword application settings.

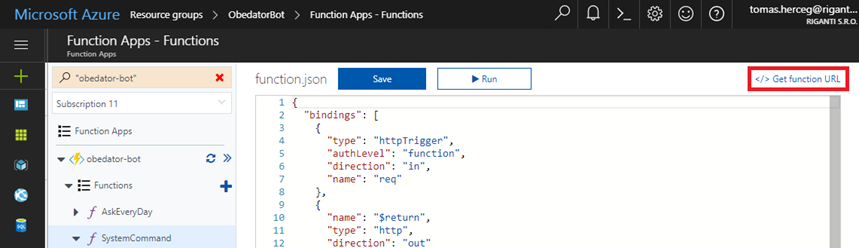

Then get the URL of the message function and paste it into the Messaging endpoint field of the bot you registered at the beginning (it's on the Settings page).

Sideloading the Bot App in Teams

To use the bot in Microsoft Teams, you need to sideload it into your team. You only need to do this once.

Create a manifest, add the bot icons, and pack everything into a ZIP archive, which you then upload in the Teams app. The manifest can look like this:

```json
{
  "$schema": "https://statics.teams.microsoft.com/sdk/v1.0/manifest/MicrosoftTeams.schema.json",
  "manifestVersion": "1.0",
  "version": "1.0.0",
  "id": "MICROSOFT_APP_ID",
  "packageName": "UNIQUE_PACKAGE_NAME",
  "developer": {
    "name": "SOMETHING",
    "websiteUrl": "SOMETHING",
    "privacyUrl": "SOMETHING",
    "termsOfUseUrl": "SOMETHING"
  },
  "name": {
    "short": "BOT_NAME"
  },
  "description": {
    "short": "BOT_DESCRIPTION",
    "full": "BOT_DESCRIPTION"
  },
  "icons": {
    "outline": "icon20x20.png",
    "color": "icon96x96.png"
  },
  "accentColor": "#60A18E",
  "bots": [
    {
      "botId": "MICROSOFT_APP_ID",
      "scopes": [
        "team"
      ]
    }
  ],
  "permissions": [
    "identity",
    "messageTeamMembers"
  ]
}
```
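Creating the ZIP by hand works fine, but if you rebuild the package often, it can be scripted. Below is a minimal sketch, assuming manifest.json and the two icons named in the manifest sit together in one folder. CreateTeamsPackage is a hypothetical helper. As far as I know, Teams expects the files at the root of the archive rather than in a subfolder, which is what includeBaseDirectory: false does:

```csharp
using System;
using System.IO;
using System.IO.Compression;

// Usage: dotnet run -- <folder with manifest.json and icons> <output .zip path>
if (args.Length == 2)
{
    CreateTeamsPackage(args[0], args[1]);
    Console.WriteLine($"Created {args[1]}");
}
else
{
    Console.WriteLine("Usage: <package folder> <output zip>");
}

// Hypothetical sketch: zip manifest.json and the icons referenced by the manifest.
// Every file in sourceFolder is included, so keep only the package files there,
// and keep zipPath outside sourceFolder.
static void CreateTeamsPackage(string sourceFolder, string zipPath)
{
    string[] requiredFiles = { "manifest.json", "icon20x20.png", "icon96x96.png" };
    foreach (string file in requiredFiles)
    {
        if (!File.Exists(Path.Combine(sourceFolder, file)))
        {
            throw new FileNotFoundException($"{file} is missing in {sourceFolder}.");
        }
    }

    // ZipFile.CreateFromDirectory throws if the archive already exists, so replace it.
    if (File.Exists(zipPath))
    {
        File.Delete(zipPath);
    }

    // includeBaseDirectory: false stores the files at the archive root, not under a folder.
    ZipFile.CreateFromDirectory(sourceFolder, zipPath, CompressionLevel.Optimal, includeBaseDirectory: false);
}
```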

And that’s it.

Debugging

I ran into a few challenges.

You can run your Functions app locally and use the Bot Framework Emulator for debugging. This lets you test the general functionality, but not the features specific to Teams (such as the channel ID).

You can also debug Azure Functions in production: find your Functions app in the Server Explorer window in Visual Studio and click Attach Debugger. It is not very fast, but it lets you make sure everything works in production.

And finally, the Azure portal offers log streaming, which is especially useful.

The only thing to be careful about is that the Functions app sometimes doesn't restart after you publish a new version. I had to stop and start the app in the Azure portal to be 100% sure it was restarted.